Making the most of AI

Thoughts on how media and broadcast organizations can make the most out of AI.

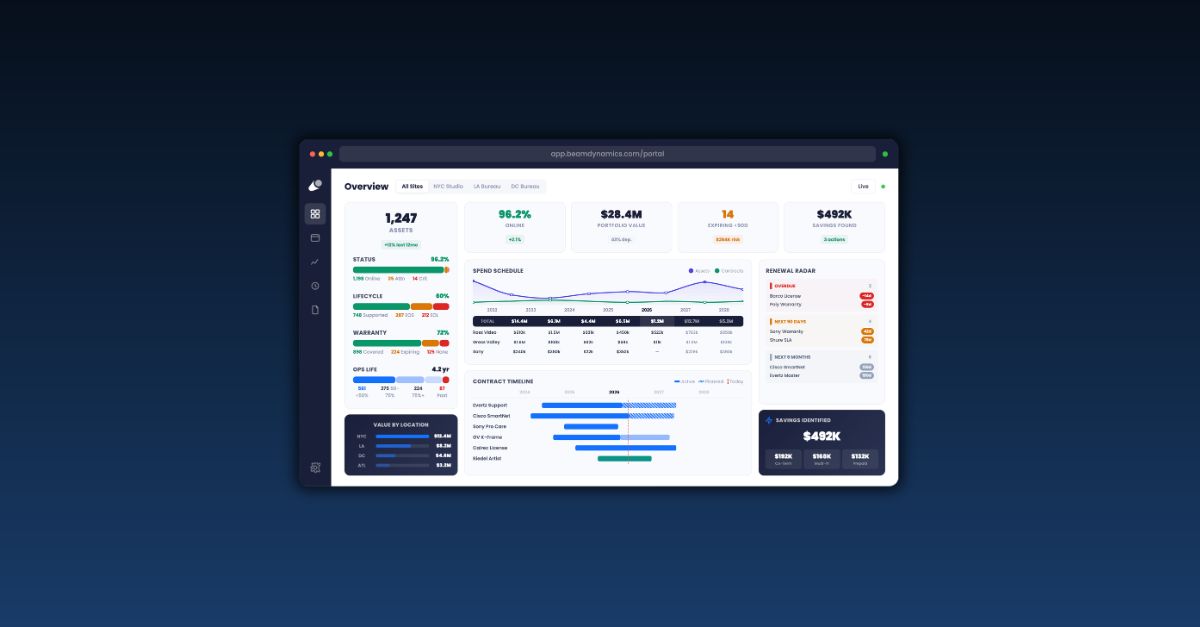

It seems like everyone is talking about artificial intelligence at the moment. Certainly for us at Beam Dynamics, AI is at the heart of what we do. We rely on it to pull together the information for our equipment lifecycle management technology.

Typically, when we talk about AI, we are talking about a “large language model” (LLM). In simple terms, this means a computer (actually, masses of parallel processes) has analyzed a very large volume of text and “learned” the patterns in it, so it can answer questions and create new material based on those patterns.

ChatGPT is built on a large language model: GPT-4, the fourth-generation model from OpenAI. GPT in this context stands for “generative pre-trained transformer”, which is to say it has already been trained on a lot of information and is ready to use. The pre-training uses both public data and data specifically licensed from third-party providers.

GPT is, in our opinion, the best LLM we have seen so far. But it is designed for widespread, even public, use, so it is a very broad model, which means it contains a lot of data we don’t care about.

ChatGPT, incidentally, is the name given to the consumer version, accessed through the simple web interface which many of you will already have tried. GPT is the underlying LLM, which we access through a more direct interface.

The problem with having such a vast amount of data is that a query is as likely to return irrelevancies as the information you need. So you need to ask precisely the right question. This is called “prompt engineering”.

Getting this right involves three sets of challenges. The first is at the technical level, where what the system is doing is assigning weights to possible responses. Remember that, whatever we call it, this is not actually intelligence: the computer is looking at sequences of tokens (words and fragments of words) to determine what is most likely to come next in response to your request.
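To make the idea of weighted next-step prediction concrete, here is a deliberately tiny sketch. It is not how GPT works internally (a real LLM uses a neural network over tokens, not word counts); it only illustrates the principle of picking the most heavily weighted continuation. The corpus and the function names are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count which word follows which; the counts act as weights."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers

def most_likely_next(model, word):
    """Return the highest-weighted follower of `word`, or None if unseen."""
    candidates = model.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

# Toy corpus, invented for this example.
corpus = "the router failed . the router rebooted . the router failed again"
model = train_bigram_model(corpus)
print(most_likely_next(model, "router"))  # prints "failed" (seen twice vs. once)
```

The model has no notion of what a router is. It simply reports that, in the text it has seen, “failed” follows “router” more often than anything else.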

The second challenge is at the system level. Here the developer has to teach the application which questions to ask, as well as to parse the answers to see if they are relevant. If you ask “What is your favorite thing to eat?” you might get answers including “sushi; lobster; steak; asparagus; mushrooms; chocolate; ice cream”, but asking “What is your favorite dessert?” would return “ice cream”.
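A minimal sketch of the parsing step described above, assuming the answers come back as a semicolon-separated string and that the application holds its own list of acceptable categories. The `DESSERTS` set and both function names are hypothetical, chosen to match the food example.

```python
# Hypothetical list of answers the application considers relevant.
DESSERTS = {"chocolate", "ice cream", "cake"}

def parse_answers(raw):
    """Split a semicolon-separated reply into clean, lower-case items."""
    return [item.strip().lower() for item in raw.split(";") if item.strip()]

def keep_relevant(answers, allowed):
    """Keep only the answers that belong to the category we asked about."""
    return [answer for answer in answers if answer in allowed]

broad_reply = "sushi; lobster; steak; asparagus; mushrooms; chocolate; ice cream"
print(keep_relevant(parse_answers(broad_reply), DESSERTS))
# prints ['chocolate', 'ice cream']
```

Filtering after the fact works, but it means paying for and processing answers that are thrown away, which is why asking the narrower question in the first place is usually the better option.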

The third challenge is to frame your interactions with the LLM as efficiently as possible. When charges are based on usage (typically the number of tokens processed, in both the question and the answer), you need to get to the information you need as directly as possible.
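As a rough illustration of why directness matters, the sketch below compares the estimated cost of a wordy prompt with a direct one. It assumes charges scale with the number of tokens processed, uses the common rule of thumb of about four characters per English token, and uses a made-up price. None of these figures are a real tariff, and the prompts are invented.

```python
import math

CHARS_PER_TOKEN = 4          # rough rule of thumb for English text (assumption)
PRICE_PER_1K_TOKENS = 0.01   # hypothetical price, for illustration only

def estimate_tokens(text):
    """Approximate the token count of a piece of text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)

def estimate_cost(prompt, expected_reply_tokens):
    """Estimate the charge for one request: prompt tokens plus reply tokens."""
    total_tokens = estimate_tokens(prompt) + expected_reply_tokens
    return total_tokens / 1000 * PRICE_PER_1K_TOKENS

wordy = ("I was wondering if you could possibly tell me, in as much detail "
         "as you like, everything there is to know about this device, "
         "including when support for it ends?")
direct = "State the end-of-support date for this device."

# Assume the wordy prompt also invites a much longer reply.
print(estimate_cost(wordy, expected_reply_tokens=500))
print(estimate_cost(direct, expected_reply_tokens=20))
```

The absolute numbers are meaningless here. The point is the ratio: a precise question costs less to send and, more importantly, less to answer.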

One way to do this is to move away from the all-encompassing large language model to an LLM which is trained on the specific data you are interested in. Foundational systems like MosaicML provide a development platform which allows you to train and build your own models. For an application like ours, where we want to understand all the information around a set of equipment, there are great advantages to working in a specially trained environment.

That is particularly true when you add functionality like tracking service tickets. First, you want users to report problems in their own words, then have the application use fuzzy logic to determine what the problem is. Subsequently, you want the user’s description to be added to the data the model learns from, because the operator’s language is likely to reflect how the equipment is used, and therefore how to prepare for future issues and develop strategies against failures.
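The idea of mapping a free-text report onto a known problem can be sketched with approximate string matching from Python's standard library. This is a simple stand-in, not a description of how any particular product does it; the issue list, the cutoff value and the function name are all hypothetical.

```python
from difflib import get_close_matches

# Hypothetical catalogue of known problems.
KNOWN_ISSUES = [
    "no video output",
    "audio out of sync",
    "device will not power on",
]

def classify_ticket(description, known_issues=KNOWN_ISSUES, cutoff=0.5):
    """Return the known issue closest to the user's wording, or None.

    `cutoff` is the minimum similarity (0 to 1) needed to count as a match.
    """
    matches = get_close_matches(
        description.lower(), known_issues, n=1, cutoff=cutoff
    )
    return matches[0] if matches else None

print(classify_ticket("Audio is out of sync"))  # prints "audio out of sync"
```

Reports that match nothing (the function returns `None`) are the interesting ones: they are candidates for human review and, once understood, for adding to the catalogue in the operator's own words.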

Building a system that delivers real value to the enterprise requires a mix of techniques: access to the best LLMs, the development of the application’s own knowledge base, the ability to do web scrapes when necessary, and just sometimes a little human input.

To find out more about Beam's Asset Intelligence™, please visit www.beamdynamics.io
